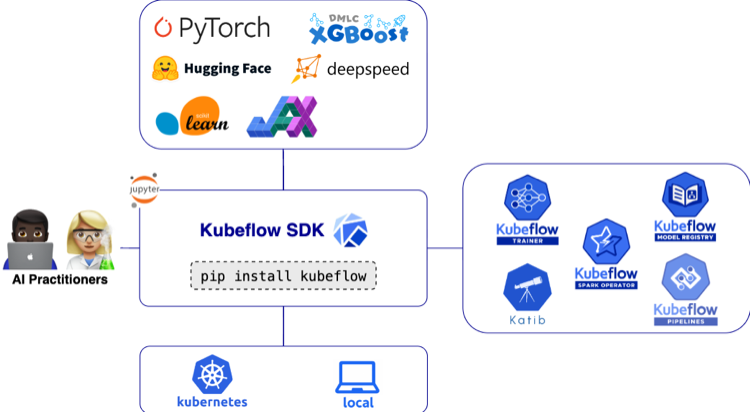

Kubeflow is the foundation of tools for AI Platforms on Kubernetes.

Just a little over one week ahead of KubeCon + CloudNativeCon EU 2026, the Kubeflow team is excited to ship Trainer v2.2. The v2.2 release reinforces our commitment to expanding the Kubeflow Trainer ecosystem – meeting developers where they are by adding native support for JAX, XGBoost, and Flux, while also delivering deeper observability into training jobs.

Key highlights of the v2.2 release include:

- First-class support for Training Runtimes for JAX and XGBoost, enabling native distributed training on Kubernetes. This marks a major milestone for the Trainer project, achieving full compatibility with Training Operator v1 CRDs: PyTorchJob, MPIJob, JAXJob, and XGBoostJob – now unified under a single TrainJob abstraction.

- Enhanced training observability, allowing progress and metrics to be propagated directly from training scripts to the TrainJob status. Hugging Face Transformers already integrates with the KubeflowTrainerCallback to automate this capability.

- Flux runtime support, bringing HPC workloads to Kubernetes and improving MPI bootstrapping within TrainJob.

- TrainJob activeDeadlineSeconds API, enabling explicit timeout policies for training jobs.

- RuntimePatches API, introducing a more flexible and scalable way to customize runtime configurations from TrainJobs.

You can now install the Kubeflow Trainer control plane and its training runtimes with a single command:

    helm install kubeflow-trainer oci://ghcr.io/kubeflow/charts/kubeflow-trainer \
        --namespace kubeflow-system \
        --create-namespace \
        --version 2.2.0 \
        --set runtimes.defaultEnabled=true

Bringing JAX to Kubernetes with Trainer

Kubeflow Trainer supports running JAX workloads on Kubernetes through the jax-distributed runtime. It is designed for distributed and parallel JAX computation using jax.distributed and SPMD primitives like pmap, pjit, and shard_map. The runtime maps one Kubernetes Pod to one JAX process and injects the required distributed environment variables so training or fine-tuning can run consistently across multiple nodes and devices.

- Multi-process CPU training

- Multi-GPU training using CUDA-enabled JAX

- Data-parallel and model-parallel JAX workloads

- Massive-scale TPU distributed training with ComputeClasses

Start by following the Getting Started guide for Kubeflow Trainer basics, and make sure the Kubeflow SDK is installed on your machine.

Use the jax-distributed runtime and initialize JAX distributed explicitly in your training script before any JAX computation:

    from kubeflow.trainer import TrainerClient, CustomTrainer


    def get_jax_dist():
        # Imports are kept inside the function, which is what runs in the training Pods.
        import os

        import jax
        import jax.distributed as dist
        import jax.numpy as jnp

        # Connect this process to the coordinator using the variables injected by the runtime.
        dist.initialize(
            coordinator_address=os.environ["JAX_COORDINATOR_ADDRESS"],
            num_processes=int(os.environ["JAX_NUM_PROCESSES"]),
            process_id=int(os.environ["JAX_PROCESS_ID"]),
        )

        print("JAX Distributed Environment")
        print(f"Local devices: {jax.local_devices()}")
        print(f"Global device count: {jax.device_count()}")

        # pmap maps over the leading axis, so size it to the local device count.
        x = jnp.ones((jax.local_device_count(),))
        y = jax.pmap(lambda v: v * jax.process_index())(x)
        print("PMAP result:", y)


    client = TrainerClient()

    # Submit the function as a TrainJob on the jax-distributed runtime.
    job_id = client.train(
        runtime="jax-distributed",
        trainer=CustomTrainer(func=get_jax_dist),
    )

    # Block until the job reaches its expected status, then print its logs.
    client.wait_for_job_status(job_id)
    print("\n".join(client.get_job_logs(name=job_id)))

The jax-distributed runtime injects JAX_NUM_PROCESSES, JAX_PROCESS_ID, and JAX_COORDINATOR_ADDRESS into the environment, and all processes must call jax.distributed.initialize() exactly once before any JAX computation.
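As a minimal sketch of how a script might consume these variables, the helper below reads the three names listed above from a mapping and returns keyword arguments for jax.distributed.initialize(). The helper name jax_init_kwargs and the example coordinator address are illustrative assumptions and are not part of the Kubeflow SDK; the point is only to fail early with a readable error when a variable is missing or inconsistent.

```python
import os
from typing import Mapping

# Variable names taken from the runtime description above.
REQUIRED_VARS = ("JAX_COORDINATOR_ADDRESS", "JAX_NUM_PROCESSES", "JAX_PROCESS_ID")


def jax_init_kwargs(env: Mapping[str, str] = os.environ) -> dict:
    """Hypothetical helper: build kwargs for jax.distributed.initialize()."""
    missing = [name for name in REQUIRED_VARS if name not in env]
    if missing:
        raise RuntimeError(f"missing environment variables: {', '.join(missing)}")

    num_processes = int(env["JAX_NUM_PROCESSES"])
    process_id = int(env["JAX_PROCESS_ID"])
    if not 0 <= process_id < num_processes:
        raise ValueError(
            f"process_id {process_id} is out of range for {num_processes} processes"
        )

    return {
        "coordinator_address": env["JAX_COORDINATOR_ADDRESS"],
        "num_processes": num_processes,
        "process_id": process_id,
    }


# Example with made-up values; inside a TrainJob the helper would be called
# without arguments so that it reads os.environ.
example_env = {
    "JAX_COORDINATOR_ADDRESS": "example-node-0:1234",  # illustrative address
    "JAX_NUM_PROCESSES": "4",
    "JAX_PROCESS_ID": "2",
}
kwargs = jax_init_kwargs(example_env)
print(kwargs)
```

Inside a training function, the result could be passed on as jax.distributed.initialize(**jax_init_kwargs()).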

For more details, refer to the Kubeflow Trainer JAX guide for jax.distributed and SPMD primitives.

Bringing XGBoost to Kubernetes with Trainer

Running distributed XGBoost workloads on Kubernetes has traditionally required manual setup of communication layers, environment variables, and cluster coordination. With this release, Kubeflow Trainer introduces built-in support for XGBoost, enabling seamless distributed training with minimal configuration.

The new xgboost-distributed runtime abstracts away the complexity of setting up XGBoost’s collective communication (Rabit). Trainer automatically provisions worker pods using JobSet and injects the required DMLC environment variables, allowing workers to coordinate and synchronize during training. The rank 0 pod is automatically configured to act as the tracker, simplifying cluster setup even further.

This integration supports both CPU and GPU workloads out of the box. For CPU training, each node runs a single worker leveraging OpenMP for intra-node parallelism. For GPU workloads, each GPU is mapped to an individual worker, enabling efficient scaling across nodes.
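The worker mapping described above can be written down as simple arithmetic. The sketch below assumes every node in the job has the same number of GPUs; the function name xgboost_worker_count is hypothetical and only illustrates the rule, it is not how Trainer computes anything internally.

```python
def xgboost_worker_count(num_nodes: int, gpus_per_node: int = 0) -> int:
    """Number of XGBoost workers: one per node on CPU, one per GPU otherwise."""
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    if gpus_per_node < 0:
        raise ValueError("gpus_per_node cannot be negative")
    if gpus_per_node == 0:
        # CPU training: a single worker per node, parallelised with OpenMP.
        return num_nodes
    # GPU training: each GPU gets its own worker.
    return num_nodes * gpus_per_node


cpu_workers = xgboost_worker_count(num_nodes=4)
gpu_workers = xgboost_worker_count(num_nodes=2, gpus_per_node=4)
print(cpu_workers, gpu_workers)
```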

For more information, please see this Notebook example and documentation guide.

Track TrainJob Progress and Expose Metrics

In this release, Kubeflow Trainer introduces a new capability to automatically update TrainJob status with real-time training progress and metrics generated directly from your ML code. This allows key insights, such as percentage completion, estimated time remaining (ETA), and training metrics, to be surfaced through the TrainJob API, eliminating the need to manually inspect training logs.

How it works

When this feature is enabled (feature flag TrainJobStatus is required), Kubeflow Trainer starts an HTTP server that exposes endpoints for reporting training progress and metrics. Client applications can send updates to these endpoints, and the TrainJob controller will automatically reflect this information in the job status. Users can then easily access these insights through the Kubeflow SDK without needing to inspect logs.
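To make the reported values concrete, here is a small sketch of how a training loop could derive percentage completion and ETA from its step counter before sending an update. The function name and the dictionary keys are hypothetical; the actual endpoint paths and payload schema are defined by Kubeflow Trainer and are not reproduced here.

```python
from typing import Optional


def progress_snapshot(current_step: int, total_steps: int, elapsed_seconds: float) -> dict:
    """Hypothetical helper: compute percent complete and ETA from step counts."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    if not 0 <= current_step <= total_steps:
        raise ValueError("current_step must be between 0 and total_steps")

    percent = round(100.0 * current_step / total_steps, 1)

    # ETA assumes the remaining steps take as long on average as the completed ones.
    eta_seconds: Optional[float] = None
    if current_step > 0:
        remaining_steps = total_steps - current_step
        eta_seconds = round(elapsed_seconds * remaining_steps / current_step, 1)

    return {"percent_complete": percent, "eta_seconds": eta_seconds}


snapshot = progress_snapshot(current_step=250, total_steps=1000, elapsed_seconds=300.0)
print(snapshot)
```

No ETA is returned before the first step finishes, since there is nothing yet to extrapolate from.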

To simplify adoption, we are collaborating with popular ML frameworks to integrate Kubeflow Trainer callbacks that automate this process. With these integrations, users don’t need to change anything to make it work!

For example, this functionality is already available in Hugging Face Transformers, where metrics are automatically reported when using the Trainer:

    from transformers import Trainer, TrainingArguments

    trainer = Trainer(model=model, args=TrainingArguments(...), train_dataset=ds)
    trainer.train()  # Progress automatically reported when running in Kubeflow

Future Plans

We have an exciting roadmap for this feature, including support for periodic, transparent checkpointing based on ETA, as well as integration with OptimizationJob for hyperparameter tuning jobs.

To learn more about this feature please see this proposal.

Bringing Flux Framework for HPC and MPI Bootstrapping

Setting up distributed ML training jobs using MPI can be very time-consuming: from stitching together launcher-worker topologies to configuring SSH-based bootstrapping, there are a lot of moving parts that require code on top of your training code. In v2.2, Kubeflow Trainer brings the Flux Framework – a workload manager that combines hierarchical job management with graph-based scheduling – to handle your HPC-style scheduling needs without the overhead that typically comes with it.

Flux uses ZeroMQ to bootstrap MPI, an improvement over traditional SSH, and also brings PMIx and support for more MPI variants. When a training job is submitted, an init container automatically handles Flux’s installation, so you do not need to install Flux in your application container. The plugin also handles cluster discovery, broker configuration, and CURVE certificate generation to provide cryptographic security for the overlay network.

For teams whose workloads sit at the intersection of ML and HPC, Flux serves as a portability layer that enables running simulation alongside AI/ML workloads. Scheduling to Flux bypasses potential etcd bottlenecks, as well as limitations of the Kubernetes scheduler that require tricks to batch schedule to an underlying single-pod queue. Flux enables fine-grained control over where pods land, and is ideal when you are running simulation pipelines that feed into model training. This integration also enables the use of Process Management Interface Exascale (PMIx) to manage and coordinate large-scale MPI workloads on Kubernetes using TrainJobs, something that was previously not possible.

Apply the Flux runtime and a TrainJob manifest. For example:

    kubectl apply --server-side -f https://raw.githubusercontent.com/kubeflow/trainer/refs/heads/master/examples/flux/flux-runtime.yaml
    kubectl apply -f https://raw.githubusercontent.com/kubeflow/trainer/refs/heads/master/examples/flux/lammps-train-job.yaml

After that, monitor the pods with kubectl get pods --watch, and inspect the lead broker logs with kubectl logs <pod-name> -c node -f. The Flux example also shows how to run the Flux cluster in interactive mode with flux-interactive.yaml, and then use kubectl exec and flux proxy to connect to the lead broker Flux instance and manually run LAMMPS inside the cluster.

The Flux runtime depends on the mlPolicy: flux trigger in flux-runtime.yaml, and you can customize the setup through environment variables such as FLUX_VIEW_IMAGE and FLUX_NETWORK_DEVICE. Binaries are installed under /mnt/flux, software is copied to /opt/software, and configurations are stored in /etc/flux-config. Related documentation includes the Kubeflow Trainer Getting Started guide, the Flux example manifests, and the Flux Framework HPSF project resources. This first iteration is a simple implementation, and users are encouraged to submit feedback to request exposure of additional features. A demo video will be showcased at the KubeCon + CloudNativeCon 2026 EU booth for those who can attend.

You can learn more about this in our Flux Guide.

Resource Timeout for TrainJobs

Previously, TrainJob resources persisted in the cluster indefinitely after completion unless manually removed, which led to etcd bloat and resource contention, with no automatic garbage collection. A job could also get stuck or run indefinitely, wasting CPU/GPU capacity and reducing cluster efficiency. In v2.2, Kubeflow Trainer adds the activeDeadlineSeconds API to TrainJob. This field lets users set a hard timeout (in seconds) for a TrainJob’s active execution time. When the deadline is exceeded, Trainer marks the TrainJob as Failed (reason: DeadlineExceeded), terminates the running workload, and deletes the underlying JobSet.

There are a couple of ways to specify the timeout for a job. The first is to set it directly in the TrainJob manifest:

    apiVersion: trainer.kubeflow.org/v1alpha1
    kind: TrainJob
    metadata:
      name: quick-experiment
    spec:
      activeDeadlineSeconds: 28800 # Max runtime: 8 hours
      runtimeRef:
        name: torch-distributed-gpu
      trainer:
        image: my-training:latest
        numNodes: 2

More information about how to configure lifecycle policies for TrainJobs can be found in our TrainJob Lifecycle Guide.
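If you generate manifests programmatically, the deadline is easier to read when written as a duration and converted to seconds. The sketch below builds the same manifest as a plain Python dictionary using only the standard library; the function name train_job_with_deadline is hypothetical, and the field names simply mirror the YAML above.

```python
import json
from datetime import timedelta


def train_job_with_deadline(
    name: str, runtime: str, image: str, num_nodes: int, max_runtime: timedelta
) -> dict:
    """Hypothetical helper: build a TrainJob manifest with a hard timeout."""
    deadline_seconds = int(max_runtime.total_seconds())
    if deadline_seconds < 1:
        raise ValueError("max_runtime must be at least one second")
    return {
        "apiVersion": "trainer.kubeflow.org/v1alpha1",
        "kind": "TrainJob",
        "metadata": {"name": name},
        "spec": {
            "activeDeadlineSeconds": deadline_seconds,
            "runtimeRef": {"name": runtime},
            "trainer": {"image": image, "numNodes": num_nodes},
        },
    }


manifest = train_job_with_deadline(
    name="quick-experiment",
    runtime="torch-distributed-gpu",
    image="my-training:latest",
    num_nodes=2,
    max_runtime=timedelta(hours=8),
)
print(json.dumps(manifest, indent=2))
```

Since kubectl accepts JSON as well as YAML, the printed output can be saved to a file and applied with kubectl apply -f.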

RuntimePatches API to override TrainJob defaults

In many distributed learning environments, multiple controllers can interact with the same TrainJob manifest, so preserving ownership boundaries is important. The new RuntimePatches API replaces PodTemplateOverrides with a manager-keyed structure that makes it explicit who applied what and when.

Each patch is scoped to a named manager and can target specific jobs or pods within the runtime, with both job-level and pod-level overrides supported. This means Kueue can inject node selectors and tolerations into the trainer pod without conflicting with another controller managing job-level metadata, and the full history of what was applied is preserved directly in the spec.

In the new TrainJob manifest, every manager owns its own entry, and pod and job overrides are separate fields under that manager. Note that the manager field is immutable after creation:

    apiVersion: trainer.kubeflow.org/v2alpha1
    kind: TrainJob
    metadata:
      name: pytorch-distributed
    spec:
      runtimeRef:
        name: pytorch-distributed-gpu
      trainer:
        image: docker.io/custom-training
      runtimePatches:
        - manager: trainer.kubeflow.org/kubeflow-sdk # who owns this entry (immutable)
          trainingRuntimeSpec:
            template:
              spec:
                replicatedJobs:
                  - name: node
                    template:
                      spec:
                        template:
                          spec:
                            nodeSelector:
                              accelerator: nvidia-tesla-v100

Note that the RuntimePatches API cannot be used to set environment variables for the node, dataset-initializer, or model-initializer containers, nor to override command, args, image, or resources on the trainer container.
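The nesting in a runtimePatches entry is deep enough that building it by hand is error-prone. As a rough sketch, the helpers below construct one entry as a Python dictionary and refuse to add a second entry for the same manager. Both function names are hypothetical, and the one-entry-per-manager check is this sketch's reading of "every manager owns its own entry" rather than a statement about how the webhook validates patches.

```python
from typing import Dict, List


def node_selector_patch(manager: str, job_name: str, node_selector: Dict[str, str]) -> dict:
    """Build one runtimePatches entry that sets a pod-level nodeSelector."""
    pod_spec = {"nodeSelector": dict(node_selector)}
    return {
        "manager": manager,
        "trainingRuntimeSpec": {
            "template": {
                "spec": {
                    "replicatedJobs": [
                        {
                            "name": job_name,
                            # Job template -> Pod template -> Pod spec.
                            "template": {"spec": {"template": {"spec": pod_spec}}},
                        }
                    ]
                }
            }
        },
    }


def add_patch(patches: List[dict], patch: dict) -> List[dict]:
    """Return a new list with the patch appended, one entry per manager."""
    if any(existing["manager"] == patch["manager"] for existing in patches):
        raise ValueError(f"manager {patch['manager']!r} already owns an entry")
    return patches + [patch]


patches = add_patch(
    [],
    node_selector_patch(
        "trainer.kubeflow.org/kubeflow-sdk", "node", {"accelerator": "nvidia-tesla-v100"}
    ),
)
print(patches[0]["manager"])
```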

For a complete description of the API’s structure, restrictions and use cases, check out the RuntimePatches Operator Guide.

⚠️ This API introduces Breaking Changes!!

PodTemplateOverrides has been removed in v2.2. If you’re currently using it in your TrainJob manifests, you’ll need to migrate to the RuntimePatches API.

Breaking Changes

This release introduces a set of architectural improvements and breaking changes that lay the foundations for a more scalable and modularized Trainer. Please review the following when upgrading to Trainer v2.2:

Replace PodTemplateOverrides with RuntimePatches API

As mentioned above, PodTemplateOverrides has been replaced with RuntimePatches API to support manager-scoped customization and prevent conflicts when multiple controllers are patching the same TrainJob.

If you are using PodTemplateOverrides in your TrainJob manifests or SDK code, you will need to migrate to the manager-keyed RuntimePatches structure. See the RuntimePatches Operator Guide, and Options Reference for more information.

Remove numProcPerNode from the Torch MLPolicy API

The numProcPerNode field has been removed from the Torch MLPolicy. Process-per-node configuration is now handled directly through the container resources, so any TrainJob manifests or SDK calls that set numProcPerNode explicitly will need to be updated before upgrading to v2.2.

Remove ElasticPolicy API

The ElasticPolicy API has been removed from MLPolicy in Trainer v2.2. Elastic training is not available in this release; we are actively working on a redesigned implementation for a future release. If your TrainJobs rely on elastic training configuration, please hold off on upgrading until that work lands.

Some TrainJob API fields are now immutable

Several TrainJob spec fields are now enforced as immutable after job creation. Modifications to fields such as .spec.trainer.image on a running TrainJob are rejected up front instead of silently failing at the JobSet controller level. If your workflows rely on updating these fields on a running TrainJob, those updates will now be rejected by the admission webhook. Please review your TrainJob update logic to ensure compatibility with the immutability policies in v2.2.
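One way to review update logic ahead of an upgrade is to diff the fields you intend to change against a list of immutable paths before sending the update. The sketch below is a client-side illustration only: the helper names are hypothetical, and the path list contains just the one field named above, since the authoritative list lives in the Trainer admission webhook.

```python
from typing import Any, Iterable, List

# Only .spec.trainer.image is named in the text; extend this tuple after
# checking which fields the webhook actually enforces.
IMMUTABLE_PATHS = ("spec.trainer.image",)

_MISSING = object()


def get_path(obj: dict, dotted_path: str) -> Any:
    """Walk a nested dict along a dotted path; return _MISSING if absent."""
    current: Any = obj
    for key in dotted_path.split("."):
        if not isinstance(current, dict) or key not in current:
            return _MISSING
        current = current[key]
    return current


def immutable_violations(
    old: dict, new: dict, paths: Iterable[str] = IMMUTABLE_PATHS
) -> List[str]:
    """Return the immutable paths whose values differ between old and new."""
    return [path for path in paths if get_path(old, path) != get_path(new, path)]


old_job = {"spec": {"trainer": {"image": "my-training:v1", "numNodes": 2}}}
new_job = {"spec": {"trainer": {"image": "my-training:v2", "numNodes": 2}}}
violations = immutable_violations(old_job, new_job)
print(violations)
```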

Release Notes

For the complete list of all pull requests, visit the GitHub release page: https://github.com/kubeflow/trainer/releases/tag/v2.2.0

Roadmap Moving Forward

We are excited to continue pushing Kubeflow as a state-of-the-art platform for distributed ML training by making TrainJobs more observable and more performant across a wide range of hardware.

One area we’re particularly excited about is bringing Multi-Node NVLink (MNNVL) support to TrainJobs, enabling them to treat GPUs across multiple machines as a single unified memory domain. For large-scale training, this means significantly faster node-to-node communication compared to standard network-based primitives, and it opens up configurations that simply weren’t practical before on Kubernetes. We are working closely with the Kubernetes community to introduce first-class support for Dynamic Resource Allocation (DRA) in TrainJobs.

We look forward to introducing automatic configuration of GPU requests for TrainJobs that will take the guesswork out of choosing the right resources. With intelligent methods guiding the process, Trainer will choose appropriate resources automatically based on the TrainJob configuration. This gives teams the power to plan experiments with confidence and trust that jobs use just the right amount of compute.

Workload-Aware Scheduling (WAS) is also actively being integrated with the native Kubernetes Workload API for TrainJob to bring robust gang-scheduling support for distributed training without third-party plugins. The integration will be available after Kubernetes v1.36, and we plan to extend it further to support Topology-Aware Scheduling and Dynamic Resource Allocation (DRA) as those APIs mature.

A full list of our 2026 roadmap can be found here.

Join the Community

The Kubeflow Trainer is built by and for the community. We welcome contributions, feedback, and participation from everyone! We want to thank the community for their contributions to this release. We invite you to:

Contribute:

Connect with the Community:

Learn More:

Headed to KubeCon + CloudNativeCon 2026 EU? Stop by the Kubeflow booth to see these features in action 😸🧊!!
